Lisa

An AI speech analytics tool that helps Quality Assurance Managers and Officers monitor compliance more efficiently on inbound and outbound calls at call centers.

For businesses and users who want to automate compliance processes and optimise agent performance, Lisa is a transcription and business intelligence tool that provides actionable insights, automated QA scoring and coaching opportunities.

Business Problem

Businesses incur high costs to monitor customer channels for compliance breaches and to maintain a consistent customer experience, yet cannot hope to listen to and act on every conversation.

Call center Quality Management processes suffer from evaluation bias and are inefficient at delivering feedback to management and agents. Only 2 to 5% of calls are ever reviewed, leaving businesses exposed to rogue agents, unqualified sales and regulatory fines.

Insufficient QM scorecards: the scorecards that Managers and Supervisors use for Quality Management tend to cover a limited number of conversational factors and very little of other relevant areas such as sales, brand and the ever-increasing, ever-changing demands of regulatory compliance.

User Problem

Quality Assurance Managers and Officers experience inefficiency because they rely on multiple disparate systems to aggregate data, give feedback to call agents and prepare training materials.

It is vital for these users to receive immediate alerts when a compliance breach has occurred, to reduce the regulatory risk created by call agents. The current process adopted by call centres is manual, relying on word of mouth or evaluation at a later time, which delays remediation.

Agent churn and inexperience can mean customers receive a very inconsistent experience. Surfacing QA trends, such as patterns in agent behaviours and script adherence, to produce actionable insights proves difficult. QA Managers and Officers want to use these trends and insights to train call agents, but face the daunting task of evaluating hundreds of calls and the arduous detective work that goes with it.

The Challenge

The existing product, built by Daisee in Qlik, was not deployable and could not be integrated into the call centres’ current systems.

The Qlik product also did not meet the needs of the target personas, namely Quality Assurance Managers, Team Leaders and Officers.

The data shown was overwhelming and required drilling down through several levels of information, which caused cognitive overload and more detective work.

Daisee faced the challenge of producing a deployable product within 4 weeks in order to raise investment and showcase the product to potential clients. At the same time, the lack of Product Management, cross-functional and agile processes slowed the development team down.

The Approach

I planned and carried out user research to identify user needs and problems, using in-depth interviews and first-impression reviews of the existing Qlik product.

The research synthesis informed the information architecture, user stories, and UX and UI designs. Concurrently, I evangelised and implemented Product Management and cross-functional collaboration processes at Daisee.

User Research

I conducted user research through in-depth interviews with early adopters and potential clients. I also gathered feedback on clients’ first impressions of the Qlik prototype Daisee had created prior to my tenure.

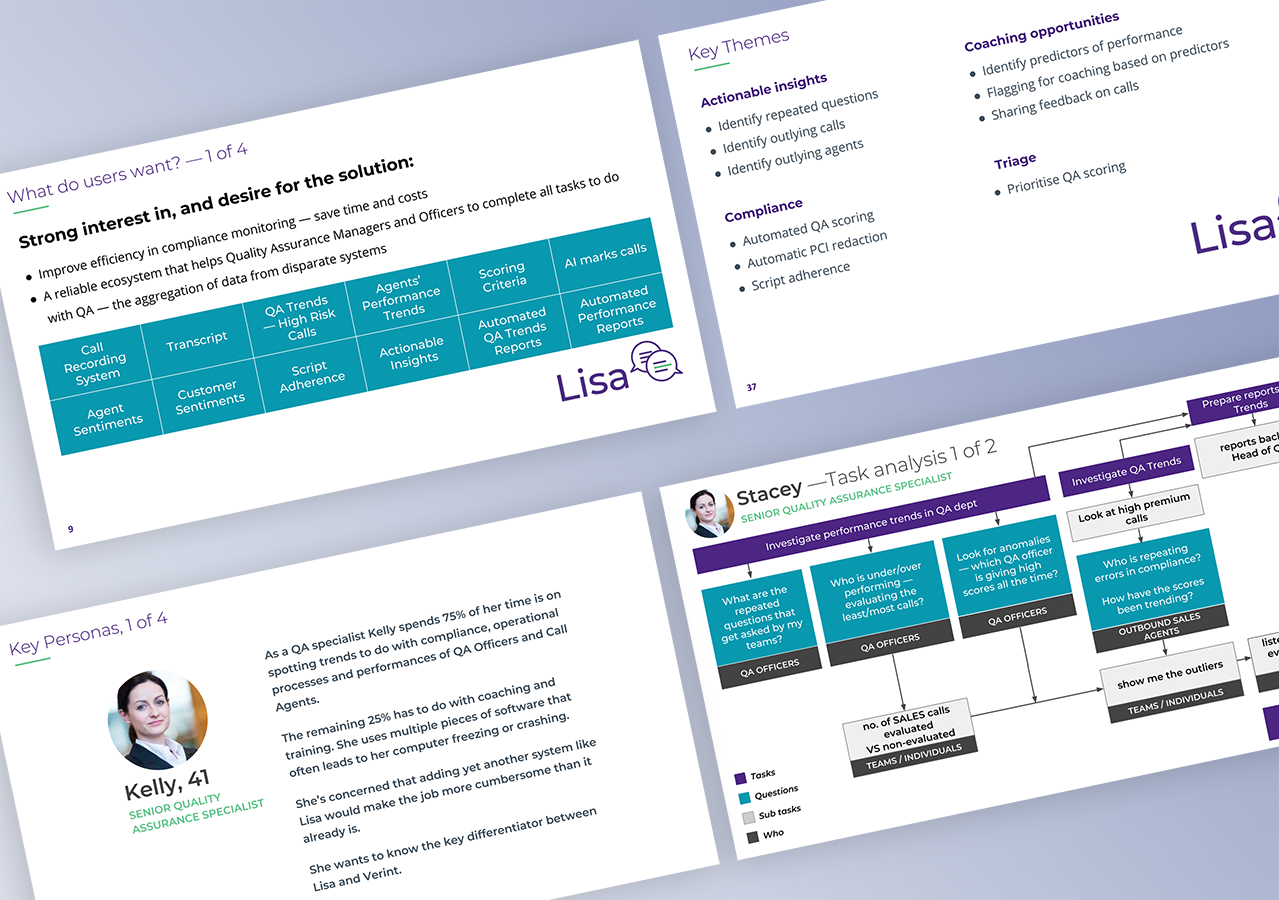

I synthesized the findings and hired a freelancer (Daniel Benhar) to help speed up this process. The outputs included details of the personas, key user problems and needs, a task analysis, and the impediments to users adopting the product.

This synthesis was then presented to the leadership and stakeholders to align everyone on a common understanding of what the customers were looking for and how we could solve their problems with Lisa.

User needs for a Senior Quality Assurance Specialist

Run a report after a week's worth of sales or cancellations, to link QA trends with business outcomes

Analyse agent performance

Keyword logic searches

Measure customer satisfaction

Drill down to spot best practice and training needs on an agent-by-agent basis, or across a whole business

User needs for a QA Team Leader

Listen to calls, review transcription

Understand semantics and meaning

Distinguish between over-talk and interruptions

Use for spotting best practice & assessing training needs

Key Themes

The key themes that emerged from the user research helped inform the features to be built for businesses and users.

Based on these themes, the user needs and the task analysis, I proceeded to build the information architecture.

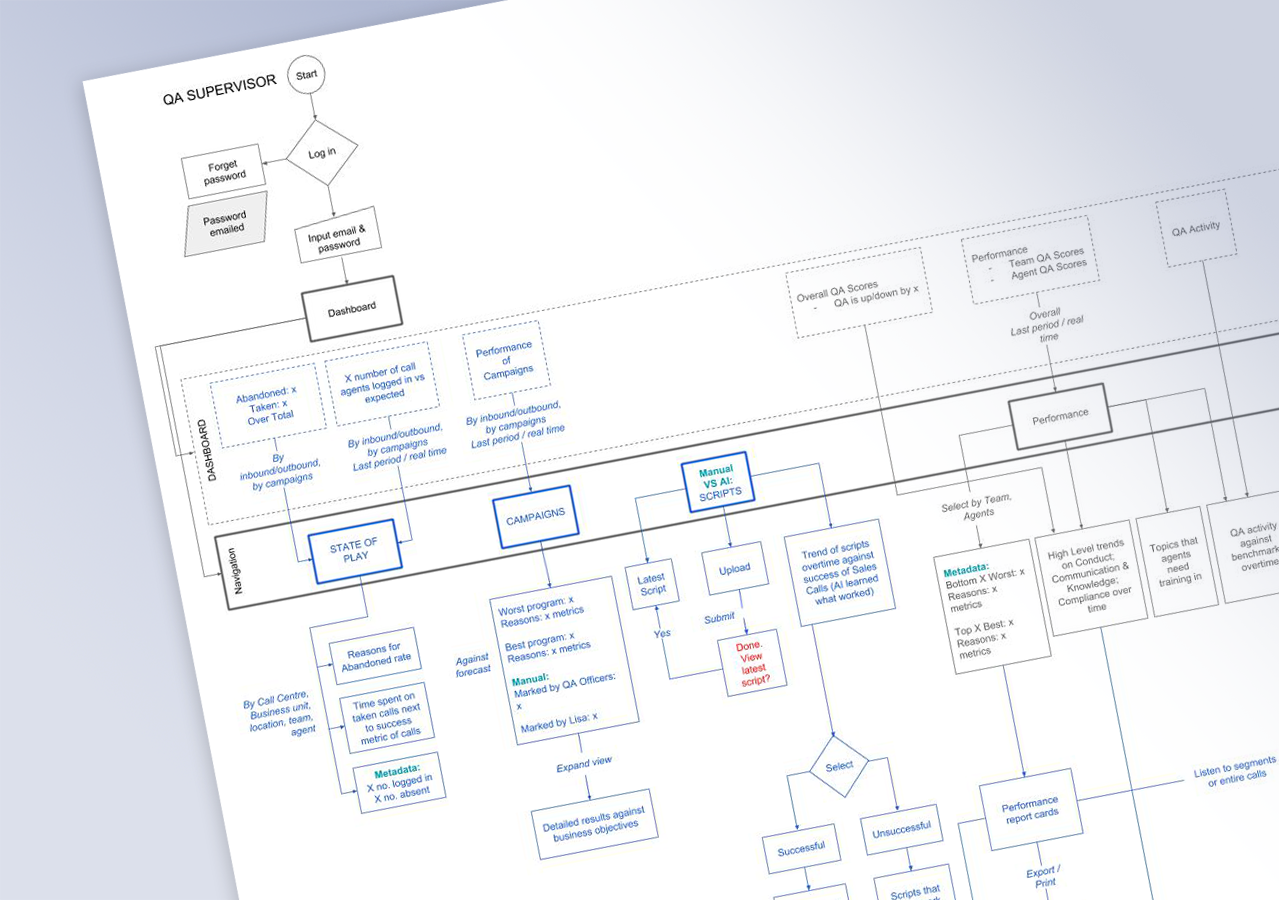

Information Architecture

The information architecture (IA) detailed the future state (items coded in blue) as well as the MVP features (items in black), which were based on what existed in the kernel and on the high-impact, low-effort items identified by the software engineers and Data Scientists.

The purpose of building the IA before diving into UI designs was to give stakeholders a bird’s-eye view of the entire user flow and to drive discussion on iterations. This process allowed the user experience to be improved quickly and reduced rework at the User Interface Design stage, which accelerated the build of high-fidelity UI designs within a compressed timeline.

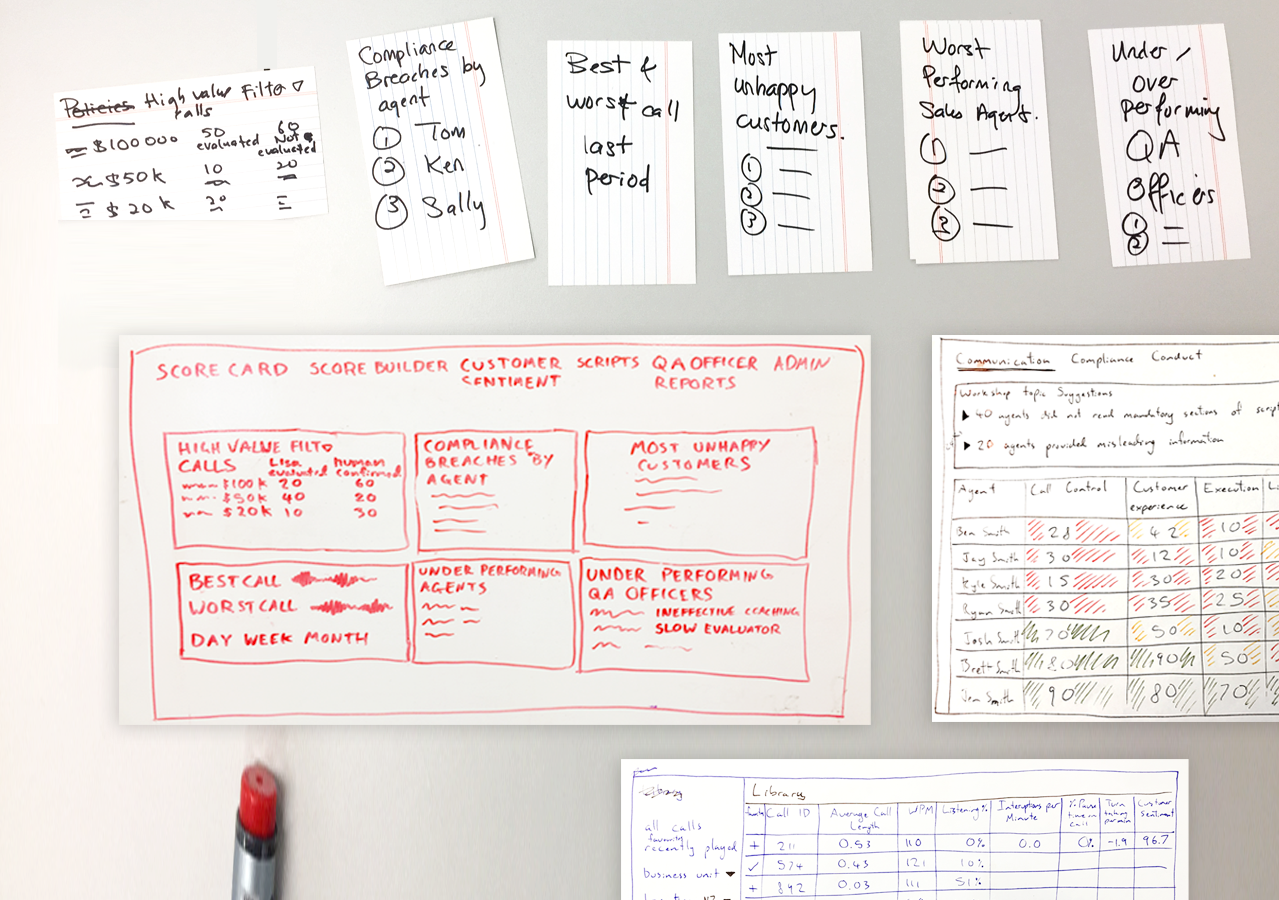

Low-Fidelity Sketches

Based on the user research and IA, the freelancer (Daniel Benhar) and I worked together to sketch out the screens. We gathered input and ideas from the cross-functional team and presented the design rationale, grounded in UI design principles and UX research.

Low-fidelity designs helped the team focus on the high-level concepts of the product rather than on implementation. Instead of emphasizing the detailed visual look of the final product, lo-fi prototyping puts the focus on macro design concepts. It also allows for instant iteration, a cost- and time-saving way to translate ideas into visual prototypes.

High-Fidelity Designs

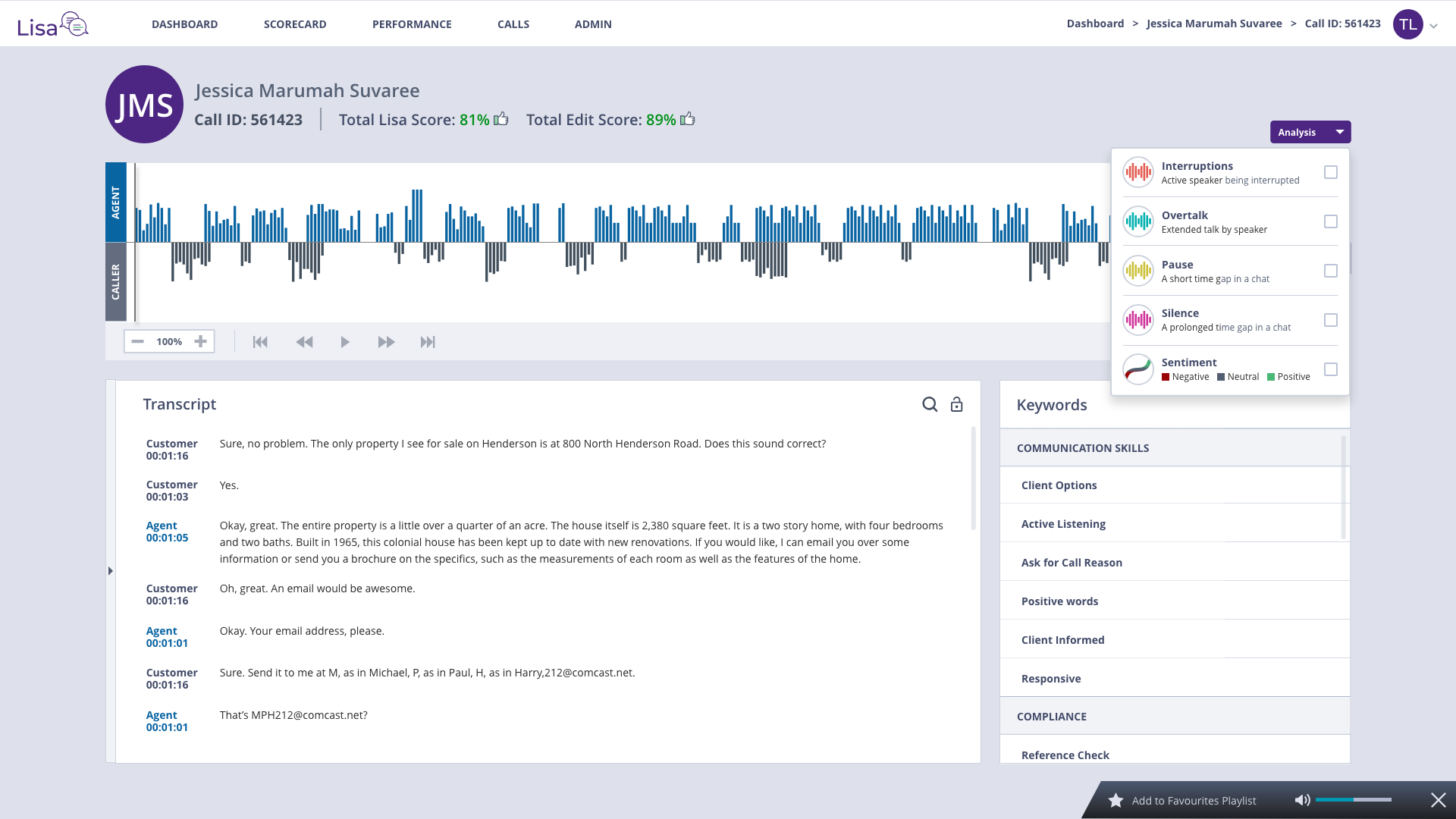

Using the low-fidelity sketches, I proceeded to focus on the details and functions of the final MVP product, such as web/app elements, colors, styles, interactions, and animations. Throughout, I often referred back to the IA to check the efficiency of the user flows, iterating on both the IA and the designs.

I then planned usability test questions to test the feasibility of the designs and uncover possible issues.

Scenario 1: Check on high-risk calls

Stacey, a QA Specialist, and Thomas, a QA Team Leader, both investigate high-premium calls relating to their products. Because the product is of high value (a Living Insurance Policy worth $150k), a compliance breach by a Call Agent would expose the business to fines.

Stacey and Thomas log into Lisa and immediately check the “High Risk Calls”. Calls marked with the “Critical Risk” icon are anomalies they would want to investigate, e.g. a Call Agent could score high on a call but still commit a compliance breach. Stacey and Thomas also look into training opportunities and agent performance to find areas for improvement. The video below showcases this scenario.
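To make the “Critical Risk” anomaly concrete, here is a minimal sketch of how such a flag could be derived. It is an illustration only, not Lisa’s actual logic: the `Call` fields, the 0 to 100 score scale and the `high_score` threshold are all assumptions introduced for this example.

```python
from dataclasses import dataclass


@dataclass
class Call:
    call_id: str
    qa_score: float          # hypothetical automated QA score on a 0-100 scale
    compliance_breach: bool  # hypothetical flag raised by a compliance check


def critical_risk_calls(calls, high_score=80.0):
    """Return calls that scored well overall yet still contain a breach.

    The threshold is an assumed value; a real product would tune it.
    """
    return [c for c in calls if c.compliance_breach and c.qa_score >= high_score]


calls = [
    Call("C-001", 92.0, True),   # high score, but a breach: the anomaly
    Call("C-002", 88.0, False),  # high score, no breach
    Call("C-003", 41.0, True),   # breach, but the low score already stands out
]

flagged = critical_risk_calls(calls)
print([c.call_id for c in flagged])  # prints ['C-001']
```

The point of the sketch is that a QA score alone would hide call C-001 among the good calls, which is why the scenario treats the score and the compliance result as separate signals.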

Scenario 2: View analysis of the best call and use it for training agents.

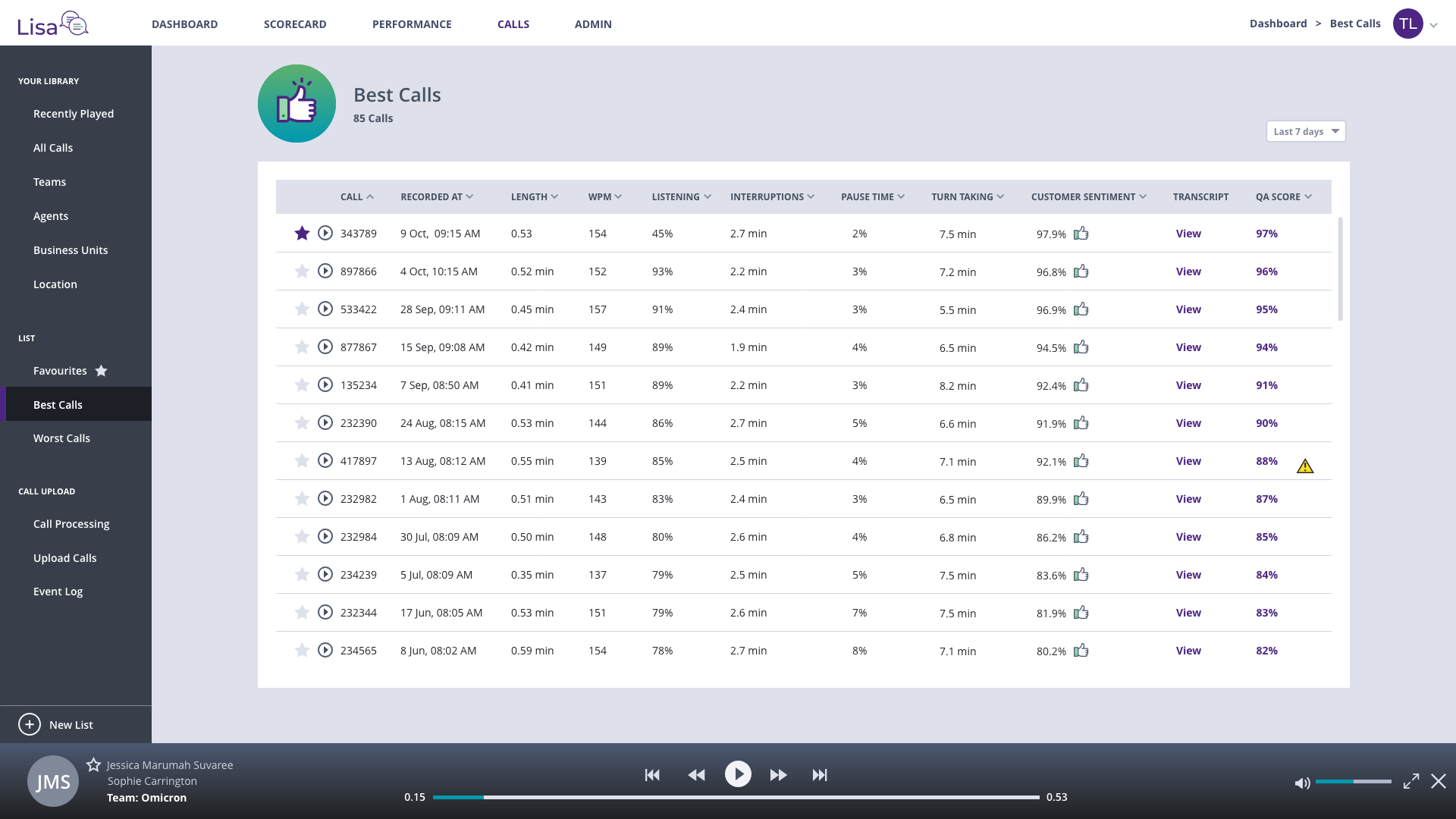

Thomas is a QA Team Leader who manages 8 QA Officers. He ensures his QA team members are following QA evaluation procedures and meeting their KPIs. To organise calibration training sessions for QA Officers, he looks for the best calls as examples for the whole department to listen to, assess and discuss. The video below showcases how Lisa could help Thomas get to the best calls immediately, without having to listen to hundreds of lengthy calls or ask his team for the best examples.

Thomas is able to maximise the audio player into the transcription screen, which shows him a detailed analysis of each call alongside the transcription and marks the sections involving Call Agents’ interruptions, overtalk, pauses, silences and sentiment. Thomas sees a set of keywords on the bottom right of the screen and is able to select a key performance indicator such as “Active Listening” to jump to the sections of the call where the call agent demonstrated “Active Listening” with the customer. Thomas uses this analysis for discussions with the agents in his team during calibration training sessions and for performance reviews with individuals.

Scenario 3: Using call lists to find best/worst calls; to find specific agents’ calls

Thomas wants to view the entire list of the best calls. He does so by clicking on “View list” under “Training Opportunities” on the dashboard. This brings him to the “Calls” screen. On the left panel, he’s able to view different categories such as “Worst Calls” and “Favourites”. This helps him select different calls to be used as examples for training the Call Agents. To narrow down his choices, he taps the star icon next to a Call ID so he can access that call in the “Favourites” list at a later time.

Scenario 4: Viewing agents’ scorecards

Thomas wants to use the leaderboard to motivate his team to do better than the other agents and teams. He reviews the best agent’s scorecard to see where the winning agent, “Jessica”, performs best.

Achievements

The product automated compliance processes and optimised agent performance for QA Managers and Officers.

Assisted users by scoring each call automatically and using Artificial Intelligence to surface the insights behind each QA score, along with actionable insights on QA trends and coaching opportunities.

Achieved positive feedback from 90% of Quality Assurance Managers and Officers for improved compliance monitoring and efficiency.

Reduced high-risk behaviors and compliance breaches by 25% through the UI for trend and performance reports.

Enabled sales executives and consultants to present the MVP, securing 3 new investors and early adopters.